In my previous posts “What is Video Streaming” and “Video Streaming: Reducing Stalls with Adaptive Bitrate” I introduced how video streaming works, and how Adaptive Bitrate helps the video adapt to the device and the network conditions to improve video streaming. In this post, I’m going to dig deeper into Adaptive Bitrate, and look at what data the player uses to request video segments that are played.

There are two major adaptive bitrate protocols: HLS and MPEG-DASH. HLS (HTTP Live Streaming) was developed by Apple, and is currently the predominant streaming method. MPEG-DASH (Dynamic Adaptive Streaming over HTTP) is an international standard for streaming. Currently, most streams are HLS, but MPEG-DASH is expected to become the main streaming solution in the coming years.

In this post, I’ll break down how HLS adaptive bitrate works, and walk through how to do an analysis of HLS video in AT&T’s Video Optimizer. I’ll focus on MPEG-DASH in a future post.

HLS Streaming: The Manifest File

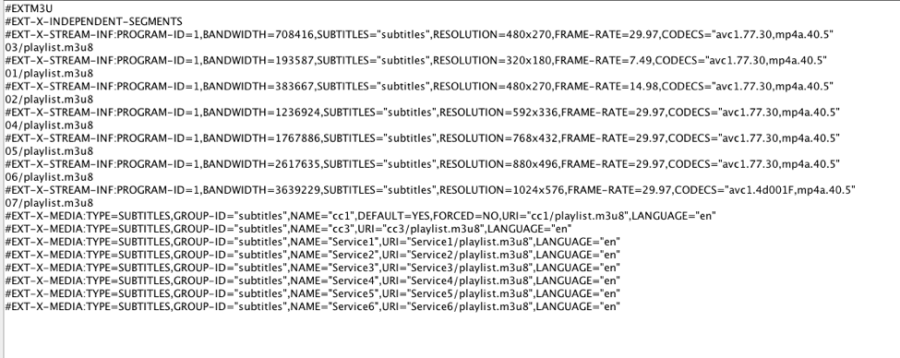

When an HLS video stream is initiated, the first file to download is the manifest. This file has the extension .m3u8, and provides the video player with information about the various bitrates available for streaming. It can also contain information about audio files (if audio is delivered separately from the video) and closed captioning. Let’s take a look at what the HLS manifest file looks like:

OK, so what is this file telling you? Let’s break it down into sections. The first ~12 lines list the bitrates available for this video. If we look at lines 3 and 4, we see the first bitrate stream listed in the document (the first stream has an importance we will discuss later):

#EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=708416,SUBTITLES="subtitles",RESOLUTION=480x270,FRAME-RATE=29.97,CODECS="avc1.77.30,mp4a.40.5"

03/playlist.m3u8

As we read across the top line, we see descriptions of this stream: the bitrate (BANDWIDTH is given in bits per second, so ~708 kbps), subtitles, resolution (480×270), frame rate (29.97 frames per second, often rounded to 30) and the codecs (in the format “video,audio”). The second line is a pointer to the manifest for this bitrate (think of this as a ‘sub-manifest’). Note the leading “03” in the sub-manifest path. This denotes the specific stream that will be played.
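
To make the attribute breakdown concrete, here is a minimal Python sketch that splits an #EXT-X-STREAM-INF line into its key/value pairs. The function name `parse_stream_inf` and the regular expression are my own illustration, not part of any HLS library, and the input line uses the plain ASCII quotes that would appear in the actual file.

```python
import re

# KEY=value pairs; a value is either a quoted string (which may contain commas)
# or an unquoted token that runs up to the next comma.
_ATTR_RE = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')

def parse_stream_inf(line):
    """Parse the attribute list of an #EXT-X-STREAM-INF tag into a dict of strings."""
    prefix = "#EXT-X-STREAM-INF:"
    if not line.startswith(prefix):
        raise ValueError("not an EXT-X-STREAM-INF line")
    attrs = {}
    for key, value in _ATTR_RE.findall(line[len(prefix):]):
        attrs[key] = value.strip('"')
    return attrs

line = ('#EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=708416,SUBTITLES="subtitles",'
        'RESOLUTION=480x270,FRAME-RATE=29.97,CODECS="avc1.77.30,mp4a.40.5"')
info = parse_stream_inf(line)

kbps = int(info["BANDWIDTH"]) / 1000          # BANDWIDTH is in bits per second
video_codec, audio_codec = info["CODECS"].split(",")
print(kbps, info["RESOLUTION"], info["FRAME-RATE"], video_codec, audio_codec)
```

The quoted-value branch of the pattern is what keeps the comma inside CODECS from being treated as an attribute separator.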

We could continue the analysis for each subsequent set of lines, but it might be easier to extract the data into a table:

The first column lists the leading identifier in the sub-manifest URL, while the others are from the description. Examining the ID column shows that the first bitrate appears out of order, and there is a good reason for this.

The Leading Segment

When a video begins playing, the player has no information about the available throughput of the network. To simplify things, HLS players typically begin by downloading the first video quality listed in the manifest. If the stream provider chose to list the IDs in ascending order, every viewer would get the lowest quality stream to start. Clearly, this is not ideal. Listing the files in reverse order (highest bitrate first) would send the highest quality video to start, which might lead to long delays and abandonment on slower networks. For this reason, streaming providers typically balance startup speed and video quality by picking a “middle of the road” starting stream.
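
As a rough illustration of that ordering decision, the sketch below takes a bitrate ladder and moves a middle rung to the front, leaving the rest in ascending order. The bandwidth values and the "pick the median" rule are hypothetical. Providers choose their starting stream by their own criteria, and these numbers are not taken from the manifest analyzed here.

```python
def order_for_startup(bandwidths_bps):
    """Return the ladder with a middle rung first, then the rest in ascending order.

    Hypothetical rule: use the median rung as the "middle of the road" start.
    """
    ladder = sorted(bandwidths_bps)
    if not ladder:
        return []
    start = ladder.pop(len(ladder) // 2)   # remove the median rung
    return [start] + ladder                # the player sees this one first

# Hypothetical ladder in bits per second (not from the manifest in this post).
hypothetical_ladder = [400_000, 800_000, 1_600_000, 3_200_000, 6_400_000]
print(order_for_startup(hypothetical_ladder))
```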

Additional Manifest Data

The manifest also links to the available subtitles, and indicates whether they should be on by default and which languages are available. In this case, the language is “en” (English).

Sub Manifest

When the player requests quality 3, it follows a link to a second manifest file: “03/playlist.m3u8”. This file lists the segments of video (and the URLs for these segments):

In HLS, files with the extension .ts are the video segments. This manifest is giving us the next 6 segments to download at quality 3. If we look at the segment names, they are in the format “date” T “time.” The first segment is 20170921T205231392, suggesting September 21, 2017 at 8:52:31.392 PM. Because I know when I captured this data, I know that the time is reported in GMT.
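
The timestamp reading above can be checked with a few lines of Python. This is a sketch that assumes the segment name is exactly the date, a “T”, and the time with milliseconds, optionally followed by “.ts”. The helper name `parse_segment_timestamp` is my own, and the UTC/GMT time zone is attached based on my observation of this capture, not on anything in the file name itself.

```python
from datetime import datetime, timezone

def parse_segment_timestamp(name):
    """Turn a name like '20170921T205231392' (optionally ending in '.ts') into a datetime."""
    stem = name[:-3] if name.endswith(".ts") else name
    # %f accepts 1 to 6 fractional digits, so '392' is read as 392 milliseconds.
    parsed = datetime.strptime(stem, "%Y%m%dT%H%M%S%f")
    return parsed.replace(tzinfo=timezone.utc)   # assumption: names are in GMT/UTC

ts = parse_segment_timestamp("20170921T205231392")
print(ts.isoformat())
```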

Closed Captioning Manifest

In the main manifest, there was also an m3u8 file for closed captioning. It looks very similar to the other sub-manifest file, but it points to a list of “.vtt” (WebVTT) files, the format used for closed captioning.
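
Because the video and caption sub-manifests share the same layout, one small routine can pull the file list out of either. The sketch below treats every non-empty line that does not start with “#” as a URI and resolves it against the playlist’s own URL. The sample playlist text, the file names, and the example.com URL are all hypothetical stand-ins, not the contents of the stream analyzed in this post.

```python
from urllib.parse import urljoin

def segment_urls(playlist_text, playlist_url):
    """List the absolute URLs of the files named in an HLS sub-manifest."""
    urls = []
    for raw in playlist_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue                      # tags and comments start with '#'
        urls.append(urljoin(playlist_url, line))
    return urls

# Hypothetical caption sub-manifest, for illustration only.
sample = """#EXTM3U
#EXT-X-TARGETDURATION:6
#EXTINF:6.0,
segment-a.vtt
#EXTINF:6.0,
segment-b.vtt
"""
urls = segment_urls(sample, "https://example.com/live/subtitles/playlist.m3u8")
print(urls)
```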

Video Download and Playback

The main manifest points the player to sub-manifest files that subsequently direct to the files required to play the video. The player downloads the files required, and the video starts playing.

At the same time, the player monitors the observed throughput of the network. If the measured throughput shows an opportunity to increase the video quality (or in the opposite case – there is a need to decrease the quality to continue the stream), the player may request another “sub-manifest” for a different stream quality. It requests the new m3u8 “sub-manifest” and begins streaming at the new quality level.
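
One simple way to picture this decision is a throughput-based rule: pick the highest bitrate that fits within a fraction of the measured throughput. The sketch below is only that, a sketch. Real players use their own (often more elaborate) heuristics, and the ladder values, the 0.8 safety factor, and the function name `choose_variant` are assumptions made for illustration.

```python
def choose_variant(bandwidths_bps, measured_bps, safety=0.8):
    """Pick the highest advertised bitrate that fits within a share of measured throughput.

    Falls back to the lowest bitrate when nothing fits, so playback can continue.
    """
    budget = measured_bps * safety
    affordable = [b for b in bandwidths_bps if b <= budget]
    return max(affordable) if affordable else min(bandwidths_bps)

# Hypothetical ladder in bits per second (not from the manifest in this post).
ladder = [400_000, 800_000, 1_600_000, 3_200_000, 6_400_000]

print(choose_variant(ladder, measured_bps=2_500_000))   # room to step up
print(choose_variant(ladder, measured_bps=300_000))     # must drop to the floor
```

Once a new bitrate is chosen, the player would request that stream’s sub-manifest and continue from there, as described above.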

Putting it All Together

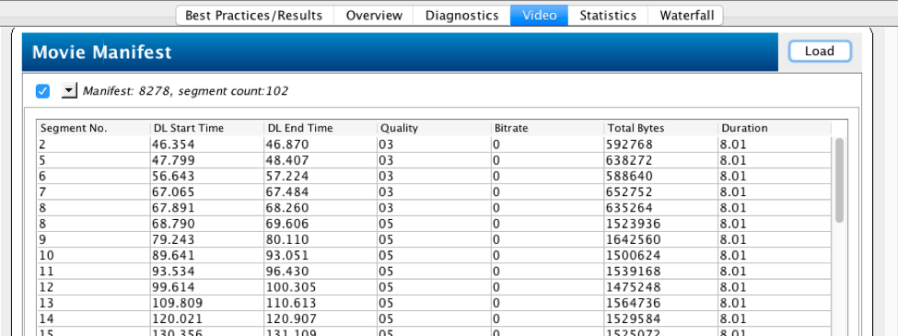

Let’s walk through this example using Video Optimizer. I began by collecting a network trace of a popular video streaming application. When I opened the trace, Video Optimizer automatically identified each video segment, and listed them in the Video tab (if this does not automatically occur, we have a Video Parsing Wizard that can help):

This table shows me which video files were downloaded for the manifest in question. This was a live stream, and while segment 2 was downloaded, playback actually began at #5. Segment 5 was downloaded at quality 3 (as directed by the manifest file shown above). Segment 8 was downloaded at quality 3, but also at quality 5. At this point, the player had measured the network throughput, and decided that it could increase the video quality for the viewer. We can see the video continued at quality 5 for the rest of the stream.

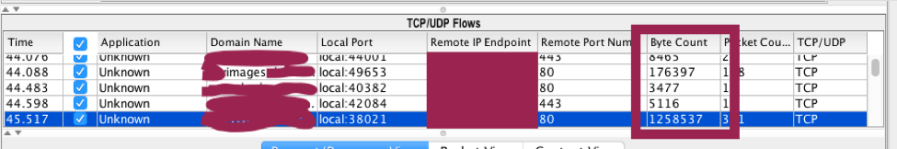

To find the manifest files in the trace, we need to look at the connections around the time the video started downloading (around 46s) for the initial m3u8 files. If we look around 68s, we will discover the quality 5 sub-manifest. Switching to the Diagnostics tab, I look for TCP connections around 46s that use a lot of data (since video files use a lot of data :)).

Focusing on the Byte Count row, I see TCP flows transferring ~176 KB and ~1.25 MB. I’ve obfuscated the IP addresses and domain names, but I immediately rule out the 176 KB connection, because the domain contains an “images” subdomain. When I look at the files transferred, they are image files. The connection with 1.25 MB of transferred data contains the manifest and video files, as seen in the request/response view:

In the yellow box, you can see that the files requested are m3u8 manifest files (latest.m3u8 and playlist.m3u8), and the responses are small – typical of a small text file.

In the green box, these files are much larger: these are the video segments beginning to download.
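
If you export the request URLs from a trace, the same sorting into manifests and segments can be done by file extension. This is a minimal sketch that is independent of Video Optimizer. The `classify` helper and the example URLs are hypothetical, and it relies only on the extensions discussed in this post (.m3u8, .ts and .vtt).

```python
import posixpath
from urllib.parse import urlparse

KINDS = {
    ".m3u8": "manifest",
    ".ts": "video segment",
    ".vtt": "caption segment",
}

def classify(url):
    """Label a request URL by its file extension, ignoring any query string."""
    ext = posixpath.splitext(urlparse(url).path)[1].lower()
    return KINDS.get(ext, "other")

# Hypothetical URLs, for illustration only.
for url in ("https://example.com/live/latest.m3u8?token=abc",
            "03/playlist.m3u8",
            "https://example.com/live/03/20170921T205231392.ts",
            "https://images.example.com/logo.png"):
    print(classify(url), url)
```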

Conclusion

In this post, we walked through how HLS Adaptive Bitrate streaming uses manifest files to supply the files required for streaming. We showed how these requests are made and how to interpret these files. We also walked through the steps to perform this analysis in AT&T Video Optimizer.
